
Bagging random forest

7/30/2023

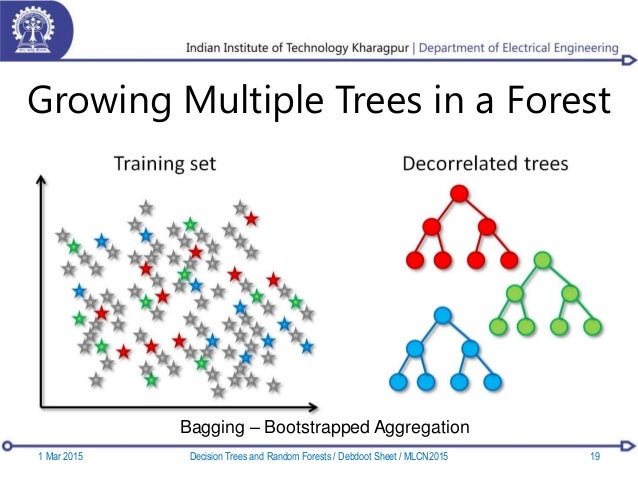

In machine learning, ensemble learning has become a powerful way to improve model performance. Among the ensemble methods, bagging, boosting, gradient boosting, and random forest stand out as influential approaches, each with its own characteristics. In this article, we will explore the concept of ensemble learning and compare these four techniques to help you understand their strengths, differences, and applications. By the end, you should be able to make an informed choice among them.

Ensemble learning combines multiple individual models to create a more robust and accurate predictive model. The idea is to leverage the diversity of the models and aggregate their predictions to improve overall performance. By doing so, ensemble techniques can compensate for individual model weaknesses, enhance generalization, and provide more reliable predictions.

Comparing Bagging, Boosting, Gradient Boosting, and Random Forest:

Bagging, or bootstrap aggregating, creates an ensemble by training multiple independent models on different subsets of the training data. Each model is built on a bootstrap sample, which is drawn by randomly selecting instances with replacement. The final prediction is typically obtained by averaging the individual predictions (regression) or by voting on them (classification). Bagging reduces variance and improves stability, and it is particularly effective with high-variance models such as deep decision trees.

Boosting is an iterative ensemble method that builds a sequence of weak models, each focusing on correcting the mistakes made by its predecessors. Models are trained sequentially, and each subsequent model gives more weight to the instances that earlier models misclassified. By combining the predictions of all models, boosting aims to reduce bias and improve overall accuracy. It is often employed when dealing with complex relationships, imbalanced datasets, or difficult classification tasks.

Gradient boosting is a specific type of boosting that uses the gradients (partial derivatives) of a loss function to optimize the ensemble of weak models. Models are again built sequentially, but each new model is fit to the negative gradient of the loss with respect to the current ensemble's predictions; for squared-error loss, this is simply the residual error left by the previous models. Gradient boosting frequently uses decision trees as weak learners and adds up their contributions to make the final prediction. The technique is known for its ability to handle complex data patterns and produce highly accurate results.

Random Forest is an ensemble technique that combines bagging with decision trees. Each tree is trained on a bootstrap sample, and each split considers only a random subset of the features, which makes the trees less correlated with one another than in plain bagging.
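The bagging procedure described above (bootstrap sampling, independently trained models, aggregation by voting) can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the base model is a one-feature decision stump chosen only to keep the example short, and the function names (`fit_stump`, `bootstrap_sample`, `fit_bagging`, `predict_bagging`) and the toy dataset are assumptions made for this sketch.

```python
import random
from collections import Counter


def fit_stump(points):
    """Fit a one-feature threshold classifier (a decision stump).

    `points` is a list of (x, label) pairs with labels 0 or 1.
    Every observed x is tried as a threshold, in both orientations,
    and the candidate with the fewest training errors is kept.
    """
    best = None
    for threshold, _ in points:
        for left, right in ((0, 1), (1, 0)):
            errors = sum(
                (left if x <= threshold else right) != label
                for x, label in points
            )
            if best is None or errors < best[0]:
                best = (errors, threshold, left, right)
    _, threshold, left, right = best
    return lambda x: left if x <= threshold else right


def bootstrap_sample(points, rng):
    """Draw len(points) instances at random, with replacement."""
    return [rng.choice(points) for _ in points]


def fit_bagging(points, n_models, rng):
    """Train each model independently on its own bootstrap sample."""
    return [fit_stump(bootstrap_sample(points, rng)) for _ in range(n_models)]


def predict_bagging(models, x):
    """Aggregate the individual predictions by majority vote."""
    votes = Counter(model(x) for model in models)
    return votes.most_common(1)[0][0]


# Hypothetical toy data: the label is 1 when x > 5, plus one noisy point.
data = [(x, int(x > 5)) for x in range(11)]
data[2] = (2, 1)  # deliberately mislabeled instance

rng = random.Random(0)  # fixed seed so the run is repeatable
ensemble = fit_bagging(data, n_models=25, rng=rng)

print(predict_bagging(ensemble, 0), predict_bagging(ensemble, 10))
```

For regression, the vote in `predict_bagging` would be replaced by an average of the individual predictions. A random forest follows the same outline with decision trees as the base model and a random subset of features considered at each split.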
